Lambda Container Image Support (example with Custom Runtime)

Amazon Web Services announced Lambda Container Image Support during Andy Jassy's AWS re:Invent 2020 keynote. It's an exciting new feature for AWS Lambda and one that quite a few people have dreamed of, including myself! So after the keynote I decided to play around with the new feature a bit - even though it was quite late in my local time (and I probably should've gone to bed)! Instead, I kept tinkering with it and created a container-based Lambda function that executes a shell script using an Amazon Linux 2 based custom runtime.

Containers… in Lambda? ¶

What does this Container Image Support really mean? In short, it means that you can now package & deploy your Lambda functions as container images:

- Create a Dockerfile
- Use one of the AWS provided base images (in FROM section)
- Define your containerized environment with all the dependencies
- Point CMD into your function handler
- Build the image (docker build)
- Push it to AWS Elastic Container Registry (docker push)
- Use the ECR image as Lambda function code source!

Also, the maximum size of a containerized Lambda image can be up to 10 GB, which should help especially with machine learning and data-intensive scenarios!

Some people have asked whether this Container Image Support will "kill" AWS Fargate... and the answer is: No, it will not kill Fargate! Lambda is still about responding to events (with short-lived function executions) whereas Fargate is about long-running services.

Defining a custom runtime ¶

The official AWS example from the News Blog showcased two container image based Lambda functions: one using Node.js and another using Python. I wanted to try something else, so I ended up playing around with custom AWS Lambda runtimes, which were originally introduced at re:Invent 2018, and created a shell script that acts as the Lambda function code.

Before we get to actually set up the container, we must define the custom runtime. A custom runtime for Lambda requires at least two executables:

| Filename | Path | Purpose |
|---|---|---|
| bootstrap | /var/runtime/ | a program that interacts between Lambda service and your function code |
| {anything} | /var/task/ | the actual function code |
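To make the bootstrap's job a bit more concrete: for every invocation, the bootstrap script in this post has to read the request ID out of the response headers it gets from the Lambda runtime API. Here's a minimal sketch of just that step, runnable without Lambda. The header file is made up for illustration (I'm reusing the request ID from the local test log later in this post); in the real bootstrap the headers come from curl.

```shell
# Sample response headers, made up for illustration. In the real bootstrap
# they are written by curl (-D "$HEADERS") when fetching the next invocation.
HEADERS="$(mktemp)"
printf 'HTTP/1.1 200 OK\r\n' > "$HEADERS"
printf 'Content-Type: application/json\r\n' >> "$HEADERS"
printf 'Lambda-Runtime-Aws-Request-Id: d1eee919-e61f-4a37-a2ce-345a89c31b82\r\n' >> "$HEADERS"

# Same pipeline as in the bootstrap:
#   grep picks the header line (case-insensitive, fixed string),
#   tr strips all whitespace including the trailing CR/LF,
#   cut keeps what comes after the first colon.
REQUEST_ID=$(grep -Fi Lambda-Runtime-Aws-Request-Id "$HEADERS" | tr -d '[:space:]' | cut -d: -f2)

echo "$REQUEST_ID"
rm -f "$HEADERS"
```

The extracted ID is what goes into the URL when the response is posted back to the runtime API.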

Since I was using shell scripts for the Lambda runtime logic, I named the function code executable function.sh. For the custom runtime setup, I am pretty much following the example from the AWS tutorial “Publishing a custom runtime” (with minor edits).
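One detail that is easier to see in isolation is how the handler string gets split. The sketch below assumes the handler value function.sh.handler used in this post and sets _HANDLER by hand (Lambda normally sets it). The inline handler function is only a stand-in for the real function.sh.

```shell
# _HANDLER is normally set by Lambda from the Dockerfile CMD;
# it is set by hand here so the snippet runs anywhere.
_HANDLER="function.sh.handler"

# Field 1 plus ".sh" gives the script file the bootstrap sources...
SCRIPT_FILE="$(echo "$_HANDLER" | cut -d. -f1).sh"
# ...and field 3 gives the name of the shell function to call.
HANDLER_NAME="$(echo "$_HANDLER" | cut -d. -f3)"

# Stand-in for function.sh, defined inline for this sketch only.
handler() {
  echo "Echoing request: '$1'"
}

# Call the function by name and capture its output as the response.
RESPONSE=$("$HANDLER_NAME" "Hello from Container Lambda!")

echo "$SCRIPT_FILE"
echo "$HANDLER_NAME"
echo "$RESPONSE"
```

Note that with this parsing, a two-part value such as function.handler would leave field 3 empty, so the three-part form is required here.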

bootstrap

    #!/bin/sh
    set -euo pipefail

    # NOTE:
    # $_HANDLER is set by AWS Lambda based on what CMD value
    # we give in the Dockerfile (shown later)

    # Initialization - load function handler
    source $LAMBDA_TASK_ROOT/"$(echo $_HANDLER | cut -d. -f1).sh"

    # Processing
    while true
    do
      HEADERS="$(mktemp)"

      # Get an event. The HTTP request will block until one is received
      EVENT_DATA=$(curl -sS -LD "$HEADERS" -X GET "http://${AWS_LAMBDA_RUNTIME_API}/2018-06-01/runtime/invocation/next")

      # Extract request ID by scraping response headers received above
      REQUEST_ID=$(grep -Fi Lambda-Runtime-Aws-Request-Id "$HEADERS" | tr -d '[:space:]' | cut -d: -f2)

      # Run the handler function from the script and pass in the event data as argument
      # IMPORTANT NOTE: -f3 gets the "handler" part from function.sh.handler
      RESPONSE=$($(echo "$_HANDLER" | cut -d. -f3) "$EVENT_DATA")

      # Send the response
      curl -X POST "http://${AWS_LAMBDA_RUNTIME_API}/2018-06-01/runtime/invocation/$REQUEST_ID/response" -d "$RESPONSE"
    done

function.sh

    # Handler function name must match the
    # last part of <fileName>.<handlerName>
    function handler () {
      # Get the data
      EVENT_DATA=$1

      # Log the event to stderr
      echo "$EVENT_DATA" 1>&2;

      # Respond to Lambda service by echoing the received data back
      RESPONSE="Echoing request: '$EVENT_DATA'"
      echo $RESPONSE
    }

Container for our custom runtime ¶

Now the interesting part begins! AWS has released a set of container base images for various Lambda runtimes. Since we're building a custom runtime, we'll want to choose the one with the newest Amazon Linux, which is provided.al2. You can view the image Dockerfiles in the aws/aws-lambda-base-images GitHub repository, where each runtime lives in its own branch, but they're not that interesting since they mainly contain tarballs.

You can pull the base images either from Docker Hub with amazon/aws-lambda-provided or from AWS ECR Public with public.ecr.aws/lambda/provided.

I'll be using the Docker Hub one in this example. My Dockerfile will be quite simple; it's just to showcase the basics:

Amazon Linux 2

    # First we pull the base image from DockerHub
    FROM amazon/aws-lambda-provided:al2

    # Copy our bootstrap and make it executable
    WORKDIR /var/runtime/
    COPY bootstrap bootstrap
    RUN chmod 755 bootstrap

    # Copy our function code and make it executable
    WORKDIR /var/task/
    COPY function.sh function.sh
    RUN chmod 755 function.sh

    # Set the handler
    # by convention <fileName>.<handlerName>
    CMD [ "function.sh.handler" ]

Let's test it locally ¶

Execute the following commands to first build the container and then run it with Docker:

    docker build -t lambda-container-demo .
    docker run -p 9000:8080 lambda-container-demo:latest

Once you have it running, open another terminal window and execute:

    curl -XPOST \
      "http://localhost:9000/2015-03-31/functions/function/invocations" \
      -d 'Hello from Container Lambda!'

After that, you should see the Lambda response “Echoing request: 'Hello from Container Lambda!'%” in the terminal window where you executed the curl command. In the previous terminal window, where Docker is running, you should see some debug messages:

    time="2020-12-01T21:42:50.024" level=info msg="exec '/var/runtime/bootstrap' (cwd=/var/task, handler=)"
    time="2020-12-01T21:42:52.378" level=info msg="extensionsDisabledByLayer(/opt/disable-extensions-jwigqn8j) -> stat /opt/disable-extensions-jwigqn8j: no such file or directory"
    time="2020-12-01T21:42:52.378" level=warning msg="Cannot list external agents" error="open /opt/extensions: no such file or directory"
    START RequestId: d1eee919-e61f-4a37-a2ce-345a89c31b82 Version: $LATEST
    Hello from Container Lambda!
      % Total    % Received % Xferd  Average Speed   Time    Time     Time  Current
                                     Dload  Upload   Total   Spent    Left  Speed
    100    63  100    16  100    47   8000  23500 --:--:-- --:--:-- --:--:-- 63000
    {"status":"OK"}
    END RequestId: d1eee919-e61f-4a37-a2ce-345a89c31b82
    REPORT RequestId: d1eee919-e61f-4a37-a2ce-345a89c31b82 Init Duration: 0.34 ms Duration: 46.04 ms Billed Duration: 100 ms Memory Size: 3008 MB Max Memory Used: 3008 MB

Publish, part 1: ECR ¶

Before we can create a new Lambda function with this container, we need to publish the container image to Amazon Elastic Container Registry - or ECR for short.

# Build the image

docker build -t lambda-container-demo .

# Create repository

aws ecr create-repository --repository-name lambda-container-demo --image-scanning-configuration scanOnPush=true

# Tag it

docker tag lambda-container-demo:latest 123412341234.dkr.ecr.eu-west-1.amazonaws.com/lambda-container-demo:latest

# Login

aws --region eu-west-1 ecr get-login-password | docker login --username AWS --password-stdin 123412341234.dkr.ecr.eu-west-1.amazonaws.com

# Push the image

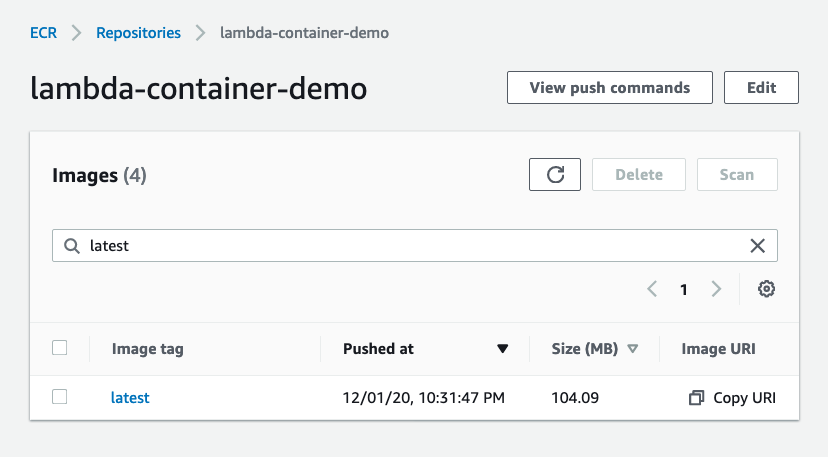

docker push 123412341234.dkr.ecr.eu-west-1.amazonaws.com/lambda-container-demo:latest123412341234 with your AWS Account ID and replace all instances of eu-west-1 with your preferred AWS region.Now you should see the published image in your ECR repo!

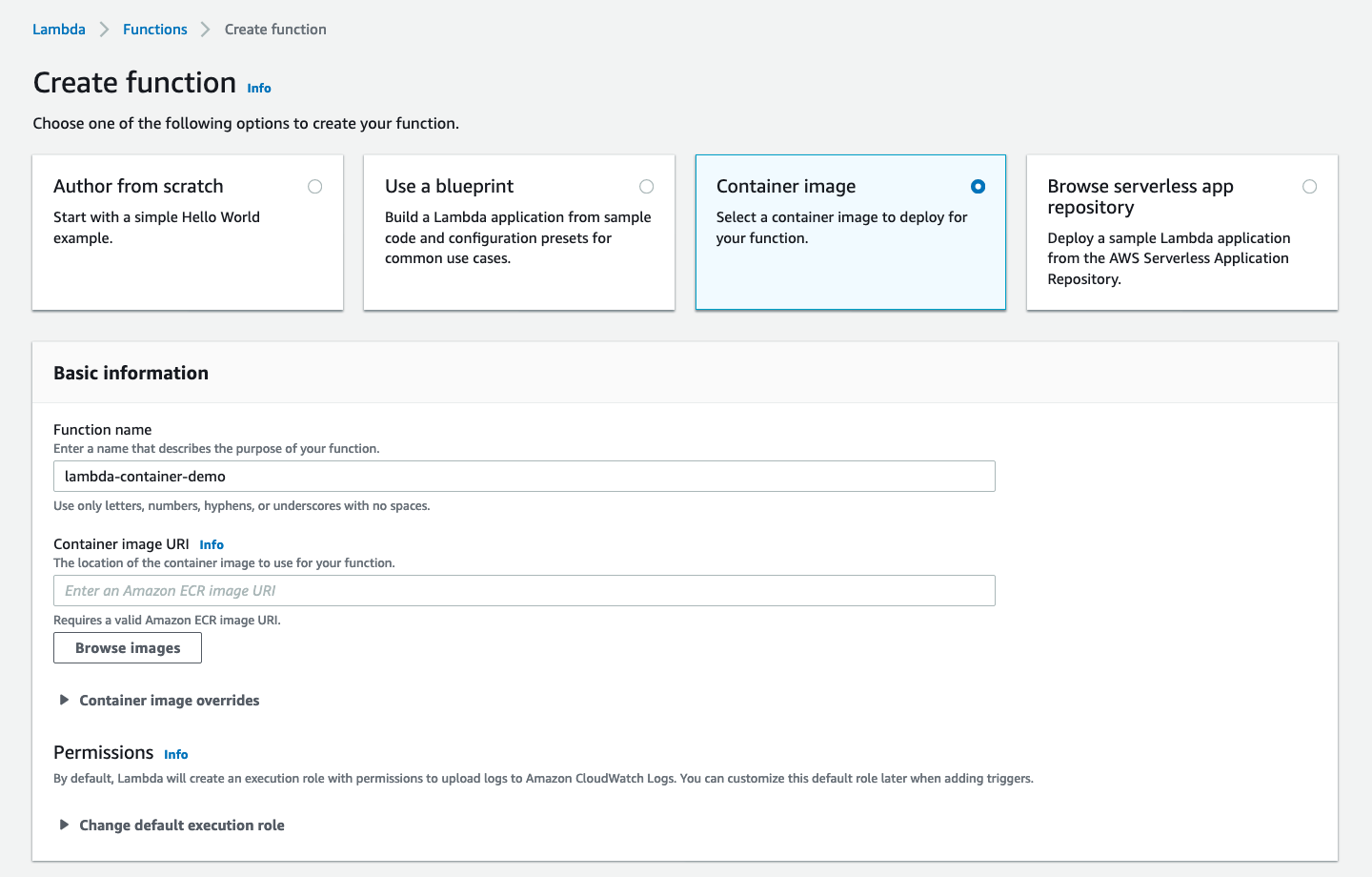
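Since the account ID and region show up in several of the ECR commands, one option is to keep them in variables and build the image URI once. This is only a sketch: the variable names are my own, the values are the placeholders used in this post, and the commands are printed as a dry run instead of being executed.

```shell
# Placeholder values from this post; replace them with your own.
ACCOUNT_ID="123412341234"
REGION="eu-west-1"
REPO_NAME="lambda-container-demo"

# Registry host and full image URI, built once and reused below.
REGISTRY="${ACCOUNT_ID}.dkr.ecr.${REGION}.amazonaws.com"
IMAGE_URI="${REGISTRY}/${REPO_NAME}:latest"

# Dry run: print the tag, login and push commands instead of running them.
echo "docker tag ${REPO_NAME}:latest ${IMAGE_URI}"
echo "aws --region ${REGION} ecr get-login-password | docker login --username AWS --password-stdin ${REGISTRY}"
echo "docker push ${IMAGE_URI}"
```

Once the printed commands look right, they can be copied into the terminal (or the echo wrappers removed).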

Publish, part 2: Lambda ¶

Since Lambda Container Image Support has only just been released, it doesn't yet seem to have CloudFormation support, so we'll have to create the Lambda function manually via the AWS Web Console UI (which makes sense since AWS probably wants people to first play around with the feature in non-prod environments).

Update 2020-12-01T23:28:40Z:

It seems that CDK already added support for deploying container image based Lambda functions in v1.76.0. Originally I investigated the CloudFormation AWS::Lambda::Function resource and its Code property and did not spot any way of using an ECR container image as the code source. I haven't yet had the chance to investigate more, but at a quick glance it seems you might be able to set an ImageUri property on the Lambda function resource, which at the time of writing did not exist in the CloudFormation resource specification. That could be explained by it being such a new feature that the public spec hasn't yet been updated. 🤷♂️
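If you'd rather script this step than click through the console, the AWS CLI should also be able to create the function from the image, assuming a reasonably recent CLI version. I haven't used this in the walkthrough, so treat it as a sketch: the role name is hypothetical (you need an existing Lambda execution role), the other values are the placeholders from this post, and the command is only printed, not executed.

```shell
# All values are placeholders; the role name in particular is hypothetical.
FUNCTION_NAME="lambda-container-demo"
IMAGE_URI="123412341234.dkr.ecr.eu-west-1.amazonaws.com/lambda-container-demo:latest"
ROLE_ARN="arn:aws:iam::123412341234:role/my-lambda-execution-role"

# --package-type Image tells Lambda the code is a container image,
# and --code ImageUri=... points at the image pushed to ECR.
CREATE_CMD="aws lambda create-function --region eu-west-1 --function-name ${FUNCTION_NAME} --package-type Image --code ImageUri=${IMAGE_URI} --role ${ROLE_ARN}"

# Dry run: print the command for review rather than running it.
echo "$CREATE_CMD"
```

The console walkthrough below does the same thing with screenshots.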

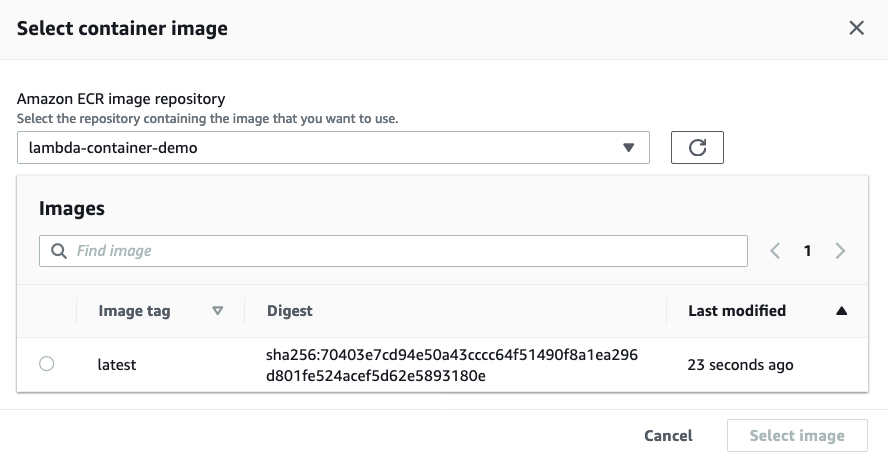

Then select “Browse images” and choose the image we pushed to ECR above:

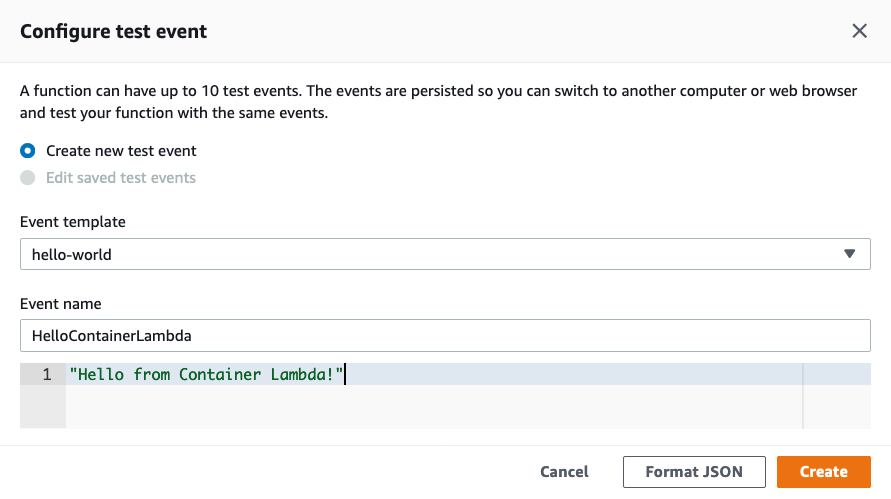

Once the Lambda service is done “Creating the function lambda-container-demo”, we can configure a test event. Let's use the same “Hello from Container Lambda!” we used in local testing previously:

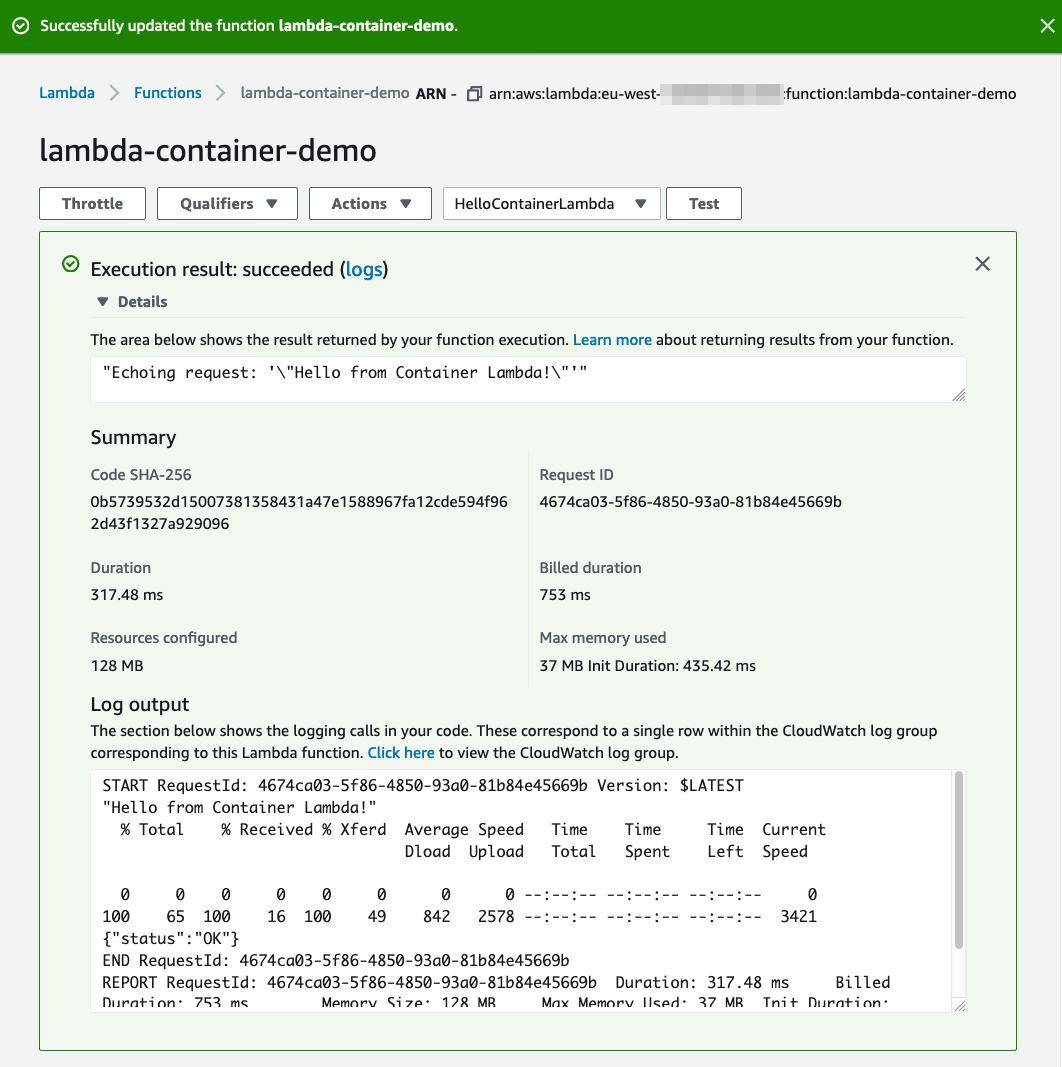

Once you've configured the test event, it's the moment of truth: Click Test! If all goes well, you should see something like this:

Summary ¶

In the end, this demonstration was somewhat simple as it didn't really showcase any interesting possibilities of using Lambda Container Images. I wanted to keep this blog post relatively short, and also keep in mind that I haven't had much time to play around with the container image support myself! 😅 But I still wanted to provide you with a minimal setup for getting started with Lambda Container Images and Custom Runtimes.

With Lambda Container Image Support you could do many interesting things, such as packaging complex dependencies using a Dockerfile or using 3rd party images as part of your Docker build. One of the biggest benefits of using containers with Lambda is that you can deploy Lambda functions up to 10 GB in size, which should help especially with machine learning and data-intensive scenarios.

Also, I think these “Lambda containers” might be a suitable solution for some small legacy workloads. Think of a scenario where you have an application that is rarely called, you don't have the resources (or interest) to refactor/rewrite it, but you also don't like the idea of having a long-running process (e.g. in ECS/Fargate/EC2) serving it. Depending on the workload, you might be able to wrap it as a “Lambda container” - just a thought!

One interesting thing to investigate in the future is the effect on Lambda performance: for example, how cold starts of Lambda functions defined with container images compare to those of “regular” functions.

Hopefully this article shed some light on Lambda with Container Image Support and got you excited to start building with it!

The example code can also be found in the aripalo/lambda-container-image-with-custom-runtime-example GitHub repository.